Sequential Record Compilation for 222666521, 6948258351, 8777708065, 604382337, 5412369435, 84999401830

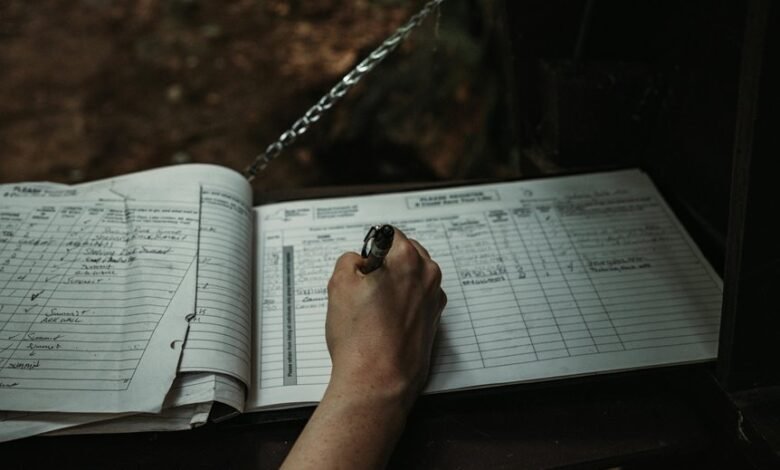

Compiling the numbers 222666521, 6948258351, 8777708065, 604382337, 5412369435, and 84999401830 into sequential records makes them easier to organize, find, and retrieve. How useful the result is depends on more than accessibility, though: it rests on the methods used to keep the data accurate and intact.

Understanding the Importance of Sequential Record Compilation

The practice of sequential record compilation serves as a crucial framework for organizing data systematically.

A methodical approach keeps the data relevant and ensures that each entry stays meaningful within the full record set.

This structure makes information easier to access and helps people make informed decisions, because they can act on a clear view of the data.
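As a minimal sketch of what a sequential compilation might look like in practice, the snippet below assigns each of the six numbers from the title a sequence position in the order given. The `Record` class, its field names, and the `compile_records` helper are hypothetical names chosen for illustration; the article does not prescribe a record layout.

```python
from dataclasses import dataclass

# The six numbers named in the title, in the order they appear there.
IDENTIFIERS = [
    "222666521",
    "6948258351",
    "8777708065",
    "604382337",
    "5412369435",
    "84999401830",
]


@dataclass(frozen=True)
class Record:
    """One entry in the compilation (hypothetical layout)."""
    seq: int         # 1-based position in the compilation
    identifier: str  # stored as text so the original digits are preserved


def compile_records(identifiers):
    """Assign each identifier a sequence number in input order."""
    return [
        Record(seq=position, identifier=identifier)
        for position, identifier in enumerate(identifiers, start=1)
    ]


records = compile_records(IDENTIFIERS)
for record in records:
    print(record.seq, record.identifier)
```

Storing the numbers as strings is a design choice rather than a requirement: it avoids accidental arithmetic and keeps any leading zeros if such values appear later.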

Methodologies for Organizing Large Data Sets

While various methodologies exist for organizing large data sets, selecting the appropriate framework is essential for maximizing efficiency and retrieval accuracy.

Sorting and indexing records make retrieval faster: a sorted list can be searched with binary search instead of a full scan, and an index maps each identifier directly to its record.
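The sorting and indexing mentioned above can be sketched with the Python standard library. This is one possible approach under simple assumptions (the six numbers fit in memory and are treated as integers); the `contains` helper and the `position_index` dictionary are illustrative names, not part of any method described in the article.

```python
from bisect import bisect_left

# The six numbers from the title, in their original order.
IDENTIFIERS = [
    222666521,
    6948258351,
    8777708065,
    604382337,
    5412369435,
    84999401830,
]

# Sort once so that later lookups can use binary search.
sorted_ids = sorted(IDENTIFIERS)


def contains(sorted_values, target):
    """Return True if target is in sorted_values, using binary search."""
    pos = bisect_left(sorted_values, target)
    return pos < len(sorted_values) and sorted_values[pos] == target


# Index from identifier to its 0-based position in the original order.
position_index = {identifier: pos for pos, identifier in enumerate(IDENTIFIERS)}

print(sorted_ids)
print(contains(sorted_ids, 604382337))
print(position_index[5412369435])
```

Binary search on the sorted list takes a logarithmic number of comparisons, while the dictionary gives average constant-time lookups at the cost of extra memory. Which one fits better depends on how the records are queried.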

Additionally, employing robust analysis techniques and data visualization methods can facilitate meaningful insights, ultimately empowering users to navigate and utilize vast data landscapes with greater freedom and clarity.

Leveraging Technology for Efficient Data Management

Harnessing advanced technology is crucial for optimizing data management practices across various sectors.

Data visualization techniques enable stakeholders to interpret complex datasets effectively, facilitating informed decision-making.

Furthermore, automation tools streamline repetitive tasks, increasing efficiency and reducing human error.

Best Practices for Maintaining Data Integrity and Accuracy

Maintaining data integrity and accuracy is essential for organizations aiming to make informed decisions based on reliable information.

Implementing robust data validation processes ensures that incoming data meets predefined standards. Additionally, establishing systematic error detection mechanisms can identify inconsistencies and anomalies early, safeguarding the quality of the dataset.
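A validation and error-detection pass of the kind described above might look like the following sketch. The rules are assumptions made for illustration: values must be digits only, between 9 and 11 characters long (the range covered by the six numbers in the title), and unique. The `validate` function and its parameter names are hypothetical.

```python
def validate(raw_values, min_len=9, max_len=11):
    """Split raw values into accepted identifiers and (value, reason) rejections.

    The length bounds are an assumption derived from the six example
    numbers, not a documented standard.
    """
    accepted = []
    rejected = []
    seen = set()
    for raw in raw_values:
        value = raw.strip()  # tolerate surrounding whitespace
        if not (value.isascii() and value.isdigit()):
            rejected.append((raw, "not numeric"))
        elif not min_len <= len(value) <= max_len:
            rejected.append((raw, "unexpected length"))
        elif value in seen:
            rejected.append((raw, "duplicate"))
        else:
            seen.add(value)
            accepted.append(value)
    return accepted, rejected


accepted, rejected = validate(
    ["222666521", " 604382337 ", "222666521", "12ab", "123"]
)
print(accepted)
print(rejected)
```

Returning the rejected values together with a reason, rather than silently dropping them, keeps anomalies visible so they can be reviewed early.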

These practices foster a culture of accuracy, empowering organizations to utilize their data effectively and confidently.

Conclusion

In the grand garden of data, sequential record compilation acts as a diligent gardener, meticulously arranging each plant to ensure optimal growth and visibility. By employing structured methodologies and leveraging technology, the garden flourishes, with each entry contributing to the overall landscape. Through best practices that nurture data integrity and accuracy, the garden not only provides sustenance for informed decision-making but also invites exploration, allowing the beauty of the dataset to be fully appreciated and understood.